How to Eliminate Pipeline Friction in AI Model Serving

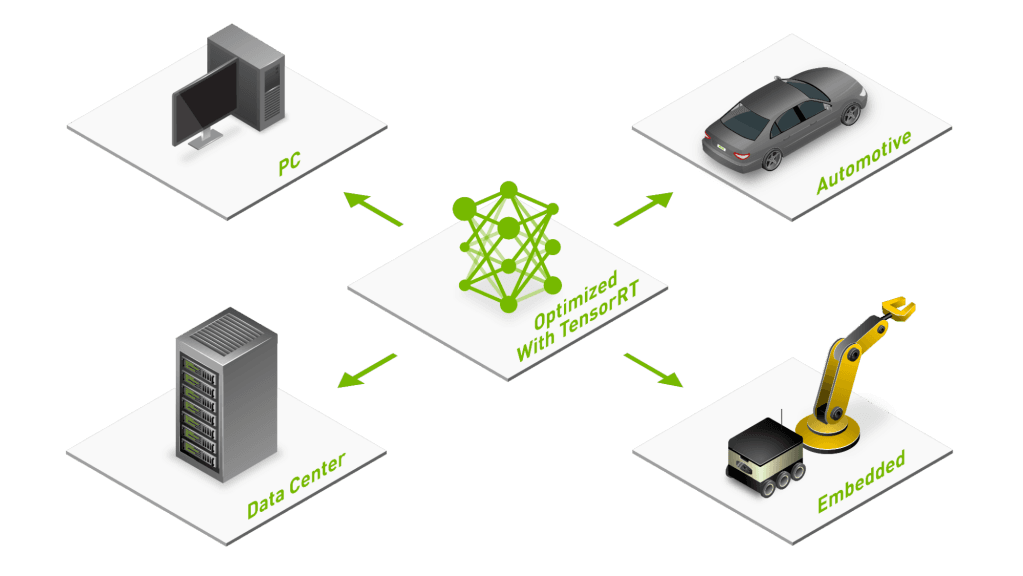

The path from a trained AI model to production should be smooth, but rarely is. Many teams invest weeks fine-tuning models, only to discover that exporting to a deployment format breaks layers, input shapes cause runtime failures, or version mismatches silently degrade performance. These issues are collectively known as pipeline friction, and they cost organizations time, money, and competitive advantage.

This post provides actionable best practices for eliminating the most common sources of friction in AI model serving pipelines. The results are concrete: APIs respond faster under real traffic. Each GPU carries more requests. Scaling up for peak hours is a smooth, low-stress effort. Cost per inference drops. And the deployments themselves stop being the part of every release that breaks.

What is pipeline friction in AI model serving?

Pipeline friction refers to any obstacle that slows or disrupts the journey of a model from training to production inference. Unlike bugs that produce clear error messages, friction often manifests as subtle inefficiencies: a model that consumes twice the expected GPU memory, an inference server that drops requests under load, or a deployment that works on one GPU architecture but fails on another.

The most frequent sources of pipeline friction can be grouped into four categories:

- Model export issues: Problems that arise when converting from training frameworks like PyTorch or TensorFlow into optimized inference formats
- Unsupported operations: Custom or recently introduced layers that the target runtime does not recognize
- Dynamic input sizes: Variable input shapes that cause shape mismatches or force unnecessary recompilation
- Version mismatches: Mismatches between libraries, drivers, and hardware that introduce silent failures or performance regressions

Each category requires specific tools and techniques.
A mature ecosystem of solutions exists, and applying them systematically can eliminate the vast majority of friction before it reaches production. The following sections detail each of these categories, along with a few more ways to minimize pipeline friction.

How to solve model export issues

Most teams train in PyTorch or TensorFlow, then export to ONNX as an intermediate representation before optimizing with NVIDIA TensorRT. This conversion step is where many problems surface: unsupported dynamic control flow, operations lacking ONNX equivalents, and tensor shape mismatches between what the training framework produces and what the export tool expects.

Best practice 1: Validate exports early and often. Build export validation into your CI/CD workflow so every model checkpoint is tested for exportability. This approach catches problematic architectural decisions before they become embedded in your codebase.

Best practice 2: Version ONNX operator sets deliberately. ONNX supports multiple operator set versions. Newer operator sets support more operations but may not be compatible with older runtimes. Pin your operator set version explicitly and document why. When upgrading, test thoroughly against your target inference runtime.

Best practice 3: Simplify your model graph before export. Remove training-only components like dropout layers, auxiliary loss heads, and debugging hooks. Use graph optimization passes to fold batch normalization and eliminate redundant operations. A cleaner graph exports more reliably and runs…
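
The export practices above can be sketched as a single check that a CI job might run for each checkpoint. This is a minimal illustration, not a prescribed workflow: it assumes a PyTorch model with one tensor input and one tensor output, and the names `PINNED_OPSET`, `max_abs_diff`, and `check_export`, the opset value 17, and the tolerance are all hypothetical choices for this example. The heavy dependencies are imported inside the function so the comparison helper stays importable on its own.

```python
"""Sketch of a CI export check: pinned opset, structural validation, output comparison."""
from typing import Sequence

# Assumption: 17 is only an example; pin whichever opset your target runtime supports
# and record the reason next to this constant.
PINNED_OPSET = 17


def max_abs_diff(a: Sequence[float], b: Sequence[float]) -> float:
    """Return the largest element-wise absolute difference between two flat sequences."""
    if len(a) != len(b):
        raise ValueError(f"length mismatch: {len(a)} vs {len(b)}")
    return max((abs(x - y) for x, y in zip(a, b)), default=0.0)


def check_export(model, example_input, onnx_path: str, atol: float = 1e-4) -> None:
    """Export `model` to ONNX, validate the file, and compare it against PyTorch."""
    # Imported lazily so the helper above can be used without the full toolchain.
    import onnx
    import onnxruntime as ort
    import torch

    model.eval()  # inference mode: dropout is disabled before tracing
    with torch.no_grad():
        expected = model(example_input)

    torch.onnx.export(
        model,
        (example_input,),
        onnx_path,
        opset_version=PINNED_OPSET,
        input_names=["input"],
        output_names=["output"],
    )

    # Structural validation of the exported graph.
    onnx.checker.check_model(onnx.load(onnx_path))

    # Numerical validation: run the exported graph and compare with PyTorch.
    session = ort.InferenceSession(onnx_path, providers=["CPUExecutionProvider"])
    (actual,) = session.run(None, {"input": example_input.numpy()})

    diff = max_abs_diff(expected.flatten().tolist(), actual.flatten().tolist())
    if diff > atol:
        raise AssertionError(f"ONNX output diverges from PyTorch by {diff:.2e}")
```

A CI step could call `check_export(model, torch.randn(1, 3, 224, 224), "model.onnx")` after loading each checkpoint (the input shape here is only an example) and fail the build on any exception, so export regressions surface at commit time rather than at deployment.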