Navigating EU AI Act requirements for LLM fine-tuning on Amazon SageMaker AI

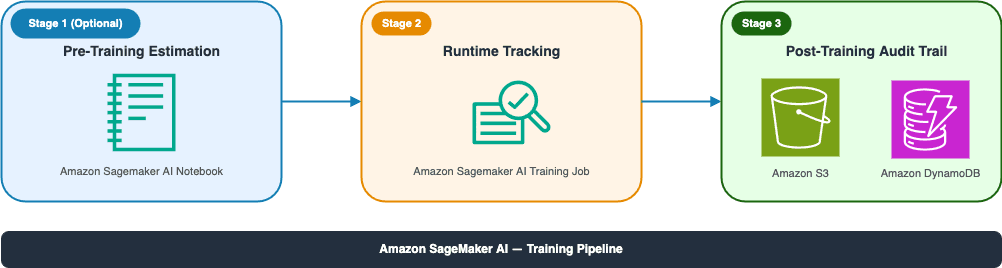

The EU AI Act requires organizations fine-tuning large language models (LLMs) to track computational resources measured in floating-point operations (FLOPs) to determine compliance obligations. As customers increasingly fine-tune LLMs for domain-specific use cases, we hear a common question: how do I know if my training job triggers new regulatory obligations?

Amazon SageMaker AI provides a managed machine learning (ML) service for building, training, and deploying models. This solution uses Amazon SageMaker Training jobs to run fine-tuning workloads on fully managed infrastructure. SageMaker Training jobs handle resource provisioning, scaling, and cluster management, with built-in support for distributed training, integration with AWS CloudTrail and Amazon CloudWatch for governance, and automatic decommissioning of compute resources after training completes. The Fine-Tuning FLOPs Meter extends these capabilities with purpose-built compliance tracking that integrates into your existing SageMaker AI pipelines.

In this post, we show you how to set up FLOPs tracking during LLM fine-tuning using the open source Fine-Tuning FLOPs Meter toolkit on Amazon SageMaker AI. You learn how to determine your compliance status with a single configuration flag and generate audit-ready documentation.

EU AI Act and FLOPs tracking requirements

On August 2, 2025, the EU AI Act introduced new requirements for organizations working with general-purpose artificial intelligence (GPAI) models. If you’re fine-tuning an LLM, you must determine whether your modifications reclassify you from a downstream user (an organization that uses an existing model without substantial modification) to a GPAI model provider (an organization legally responsible for a model’s compliance). The classification depends on how much compute your fine-tuning consumes, measured in FLOPs.
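To give a sense of scale for fine-tuning compute, the following minimal sketch uses the widely cited rule of thumb that dense transformer training costs roughly 6 FLOPs per parameter per training token. This is only an approximation under the assumption that all parameters are updated. It is not necessarily how the Fine-Tuning FLOPs Meter measures compute, and the function name and example numbers are hypothetical.

```python
def estimate_training_flops(num_parameters: int, num_tokens: int) -> float:
    """Approximate training compute with the 6 * N * D rule of thumb.

    Assumption: a dense transformer where every parameter is trained
    (forward pass ~2*N FLOPs per token, backward pass ~4*N FLOPs per token).
    Parameter-efficient methods and measured FLOPs can differ from this.
    """
    if num_parameters <= 0 or num_tokens <= 0:
        raise ValueError("num_parameters and num_tokens must be positive")
    return 6.0 * num_parameters * num_tokens


# Hypothetical job: an 8-billion-parameter model fine-tuned on 2 billion tokens.
estimated_flops = estimate_training_flops(8_000_000_000, 2_000_000_000)
print(f"Estimated fine-tuning compute: {estimated_flops:.2e} FLOPs")
```

An estimate like this is useful for planning before a job runs. For audit purposes, rely on the compute actually recorded for the training job.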
The one-third rule distinguishes between minor modifications and substantial retraining. The rationale behind the one-third threshold: regulatory analysis determined that using more than one-third of the original training compute typically results in significant behavioral changes to the model, effectively creating a new model with different risks that warrant full provider obligations.

Most organizations use scenario 2 in the following table because model providers rarely publish exact training FLOPs. Unless you have documented pretraining compute from your model provider, the default threshold of 3.3×10²² FLOPs applies. There are three applicable scenarios and thresholds to consider:

The Fine-Tuning FLOPs Meter automatically determines which scenario applies based on whether you provide the PRETRAIN_FLOPS environment variable.

To help you quickly determine which threshold path applies, use the following decision flow:

Step 1: Do you know your base model’s pretraining FLOPs?

- No: Proceed directly to the Default Threshold of 3.3×10²² FLOPs.
- Yes: Move to the next evaluation step.

Step 2: Evaluate pretraining compute scale

If you know your pretraining compute, compare it against the following orders of magnitude:

- Is pretraining compute ≥ 10²⁵ FLOPs?
  - Yes: You fall under the Systemic Risk Threshold. Use a threshold of 3.3×10²⁴ FLOPs.
  - No: Move to the next question.
- Is pretraining compute ≥ 10²³ FLOPs?
  - Yes: Use a Relative Threshold of one-third of actual pretraining compute.
  - No: Proceed to the…